Measuring entropy can be a useful heuristic when analyzing files. This is a short introduction to performing entropy analysis on binary and text files using F#.

What can entropy-based analysis be used for? One specific use case is searching for secret keys in files, in particular scanning for hardcoded secrets or passwords that were accidentally committed to git. Scanning tools can catch these kinds of issues, and it is an interesting exercise to see what goes on behind the scenes with them. Another use case is scanning binaries for reverse engineering purposes. Successful scanning is predicated on the keys being blocks of random characters (a fair assumption for good passwords). Because code files have a certain level of predictability, blocks of randomness stand out against them. At the core of this process is Information Theory, namely Shannon Entropy.

If you want to follow along, you’ll need .NET Core installed. For visualizations, XPlot will provide the necessary functionality.

    dotnet new console -lang F# -n Entropy

    open System

$$ {H = - \sum^N_{i=1}p_i \log p_i} $$

The above equation is our starting point, where $p_i$ is the probability of seeing a particular character. Implementation of the Shannon Entropy calculation is deceptively simple. First, take a byte array and count the frequency of each byte value. Second, for each byte value multiply its probability by the log of that probability, sum the products, and negate the result. Here is where I diverge from the typical implementation. Discussion often focuses on log base 2, since the result is then measured in bits. I prefer to work with a normalized value. For 256 possible values I use log base 256 to scale between 0 and 1, where 0 is no entropy and 1 is maximum entropy.
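To make the normalization concrete, here is a short worked version of the change of base. The subscripted names $H_2$ and $H_{256}$ are my own shorthand for the base-2 and base-256 entropies and do not appear elsewhere in the post.

$$ \log_{256} x = \frac{\log_2 x}{8} \quad\Longrightarrow\quad H_{256} = -\sum^{256}_{i=1} p_i \log_{256} p_i = \frac{H_2}{8} $$

Two boundary cases follow directly. If every byte has the same value, then one $p_i = 1$ and $H_{256} = -1 \cdot \log_{256} 1 = 0$. If all 256 byte values are equally likely, then each $p_i = 1/256$ and

$$ H_{256} = -256 \cdot \frac{1}{256} \log_{256} \frac{1}{256} = 1 $$

One consequence worth keeping in mind: a block of $n < 256$ bytes can contain at most $n$ distinct values, so its normalized entropy cannot exceed $\log_{256} n$. For $n = 50$ that ceiling is roughly $0.71$.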

    let NormalizedEntropy (data: byte[]) =
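As a minimal sketch of the two steps described above (count byte frequencies, then sum the weighted logs in base 256), something along these lines should work. The name `normalizedEntropy` and the handling of empty input are my assumptions, not necessarily what the original listing does.

```fsharp
open System

/// Shannon entropy of a byte array using log base 256, so the result lies in [0, 1].
/// Returning 0.0 for empty input is an assumption made for this sketch.
let normalizedEntropy (data: byte[]) : float =
    if data.Length = 0 then
        0.0
    else
        // Step 1: count how often each of the 256 possible byte values occurs.
        let counts = Array.zeroCreate<int> 256
        for b in data do
            counts.[int b] <- counts.[int b] + 1

        // Step 2: sum -p * log256(p) over the values that occur.
        // It is written as p * log256(1/p), which is the same quantity.
        let total = float data.Length
        counts
        |> Array.sumBy (fun c ->
            if c = 0 then
                0.0
            else
                let p = float c / total
                p * Math.Log(1.0 / p, 256.0))

// Example usage: a constant block versus one occurrence of every byte value.
let constantBlock = normalizedEntropy (Array.create 64 65uy)
let uniformBlock = normalizedEntropy (Array.init 256 byte)
printfn "constant: %.2f, uniform: %.2f" constantBlock uniformBlock
```

The constant block sits at the bottom of the scale and the uniform block at the top, which matches the 0 to 1 range the normalization is meant to give.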

Since one possible use of entropy analysis is checking files for secrets, a simple example is looking at Program.fs. For comparison I copied the file and added a couple of random passwords. Taking it a step further, I generated a fake.pem to demonstrate how the entropy of code differs from that of pem files (something else worth scanning for in code repos).

    [ "Program.fs"

As the results below show, putting some randomized strings (like secret keys or passwords) in a code file increases its entropy. The pem file is noticeably higher still. Although this is interesting, a single whole-file number has its limitations for any real scanning. Perhaps I could scan all my code files to see if there is a typical range and use that to find anomalies, but I have a better idea.

    Program.fs - 0.60

Details can be lost by looking at a file as a whole. Another approach is to use a sliding window through the file, along with a threshold to show any blocks of the file that have a high entropy. With a little refactoring for readability, the entropy calculation is broken out into its own function. Then a sliding window is applied against the file. Whenever a window is above the entropy threshold, that block is shown (at least its printable characters). To cut down on excessive printing, overlapping windows are not shown.

    /// Given a frequency array, calculate normalized entropy for a specified window size
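The sliding-window scan described above could be sketched roughly as follows. The function names (`blockEntropy`, `findHighEntropyBlocks`, `printable`) and the sample string are hypothetical, and jumping a full window ahead after a hit is just one way to avoid reporting overlapping windows.

```fsharp
open System
open System.Text

/// Normalized (log base 256) Shannon entropy of a block of bytes.
let blockEntropy (block: byte[]) : float =
    if block.Length = 0 then
        0.0
    else
        let counts = Array.zeroCreate<int> 256
        for b in block do
            counts.[int b] <- counts.[int b] + 1
        let total = float block.Length
        counts
        |> Array.sumBy (fun c ->
            if c = 0 then
                0.0
            else
                let p = float c / total
                p * Math.Log(1.0 / p, 256.0)) // same as -p * log256(p)

/// Returns (offset, entropy) for each window whose entropy is above the threshold.
/// After a hit the scan jumps a full window ahead, so reported blocks never overlap.
let findHighEntropyBlocks (windowSize: int) (threshold: float) (data: byte[]) : (int * float) list =
    if windowSize <= 0 then invalidArg "windowSize" "window size must be positive"
    let hits = ResizeArray<int * float>()
    let mutable i = 0
    while i + windowSize <= data.Length do
        let e = blockEntropy data.[i .. i + windowSize - 1]
        if e > threshold then
            hits.Add((i, e))
            i <- i + windowSize
        else
            i <- i + 1
    List.ofSeq hits

/// Replace non-printable bytes with '.' so a block can be shown on the console.
let printable (block: byte[]) : string =
    String(block |> Array.map (fun b -> if b >= 32uy && b < 127uy then char b else '.'))

// Hypothetical sample: repetitive filler followed by a made-up random-looking key.
let sampleText = String('a', 80) + "q8Zr2LxV0nT5yKb7WcJ4mHd9PfGs3AeU6oNiXt1RhQvYzMw8Dj"
let sampleBytes = Encoding.ASCII.GetBytes(sampleText)
for (offset, e) in findHighEntropyBlocks 50 0.58 sampleBytes do
    printfn "%d (%.2f): %s" offset e (printable sampleBytes.[offset .. offset + 49])
```

Recomputing the entropy from scratch for every window is simple but does redundant work. For large files, updating the frequency counts incrementally as the window moves would be cheaper.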

The file can then be evaluated by providing a window size and threshold. A window size of 50 bytes and a threshold of 0.58 are mostly arbitrary, but some experimentation shows they give a reasonable result. I can now run the analysis against a code file with secrets and the pem file.

    [ "Program-Secret.fs"

The results provide a bit more insight than before. The entire pem file is captured (as expected). For the code file, not everything caught is a secret. Ordinary code blocks get caught up in the process, and that’s fine. This is about finding possible areas of concern, and that goal is accomplished. This is a useful approach, but not perfect. There are still gaps that can be closed to make it a more robust solution.

    File: Program-Secret.fs

One question that remains is which values for window size and threshold maximize finding secrets while minimizing false positives. One factor is that different languages have their own characteristics, which affects the best parameters. Beyond static parameters, statistical methods can be used to determine anomalies and parameters for a file, providing a more dynamic approach. In the spirit of investigating different angles, I ran the analysis against some of my repos (using a window size of 50). My code generally falls within a range, but it appears that scanning could be refined by taking file type into account.

    .c : 0.530
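One simple way to make the threshold dynamic, offered only as an illustration of the statistical idea mentioned above, is to flag windows whose entropy lies more than `k` standard deviations above the file's own mean. The function names and the choice of a mean-plus-deviation rule are my assumptions; the numbers in the example are made up.

```fsharp
/// Mean and population standard deviation of a non-empty array.
let meanAndStdDev (values: float[]) : float * float =
    let mean = Array.average values
    let variance = values |> Array.averageBy (fun v -> (v - mean) * (v - mean))
    mean, sqrt variance

/// Indices of windows whose entropy exceeds mean + k standard deviations.
/// This is a hypothetical rule for illustration, not a tuned detector.
let anomalousWindows (k: float) (entropies: float[]) : int[] =
    if entropies.Length = 0 then
        [||]
    else
        let mean, sd = meanAndStdDev entropies
        let cutoff = mean + k * sd
        entropies
        |> Array.indexed
        |> Array.filter (fun (_, e) -> e > cutoff)
        |> Array.map fst

// Made-up per-window entropies: 99 ordinary windows and one outlier at index 99.
let exampleEntropies = Array.append (Array.create 99 0.50) [| 0.90 |]
printfn "%A" (anomalousWindows 2.0 exampleEntropies)
```

Because the cutoff is derived from each file's own distribution, the same `k` can be applied across file types whose baseline entropy differs, though a file that is high entropy throughout (such as a pem file) would produce few or no outliers under this rule.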

Because there are multiple ways to look at the data, it is time to go a bit more visual. The adaptation below returns an array of entropy values, one per sliding window. This array is then fed into a charting function. A chart provides a nice visual representation of the entropy over the entire file.

    /// Normalized entropy using a rolling window
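A rolling-window version that returns one entropy value per window position might look like the sketch below. It keeps a single frequency array and updates it as the window advances, which is my own design choice; the names `entropyFromCounts` and `rollingEntropy` are hypothetical. Charting is left out so the sketch has no package dependency, but the resulting array is the kind of series a line chart function (for example one from XPlot) could plot.

```fsharp
open System

/// Normalized (log base 256) entropy from a 256-slot frequency array covering `total` bytes.
let entropyFromCounts (counts: int[]) (total: int) : float =
    if total = 0 then
        0.0
    else
        let n = float total
        counts
        |> Array.sumBy (fun c ->
            if c = 0 then
                0.0
            else
                let p = float c / n
                p * Math.Log(1.0 / p, 256.0)) // same as -p * log256(p)

/// Entropy of every window of `windowSize` bytes, indexed by the window's start offset.
/// Returns an empty array when the data is shorter than one window.
let rollingEntropy (windowSize: int) (data: byte[]) : float[] =
    if windowSize <= 0 || data.Length < windowSize then
        [||]
    else
        // Seed the frequency counts with the first window.
        let counts = Array.zeroCreate<int> 256
        for i in 0 .. windowSize - 1 do
            counts.[int data.[i]] <- counts.[int data.[i]] + 1
        let result = Array.zeroCreate<float> (data.Length - windowSize + 1)
        result.[0] <- entropyFromCounts counts windowSize
        // Slide one byte at a time: drop the byte that leaves, add the byte that enters.
        for start in 1 .. data.Length - windowSize do
            let leaving = int data.[start - 1]
            let entering = int data.[start + windowSize - 1]
            counts.[leaving] <- counts.[leaving] - 1
            counts.[entering] <- counts.[entering] + 1
            result.[start] <- entropyFromCounts counts windowSize
        result

// Example usage: a run of identical bytes followed by varied bytes.
let rollingSample = Array.append (Array.create 100 65uy) (Array.init 100 (fun i -> byte (i + 33)))
let series = rollingEntropy 50 rollingSample
printfn "windows: %d, min: %.2f, max: %.2f" series.Length (Array.min series) (Array.max series)
```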

    [ "Program-Secret.fs"

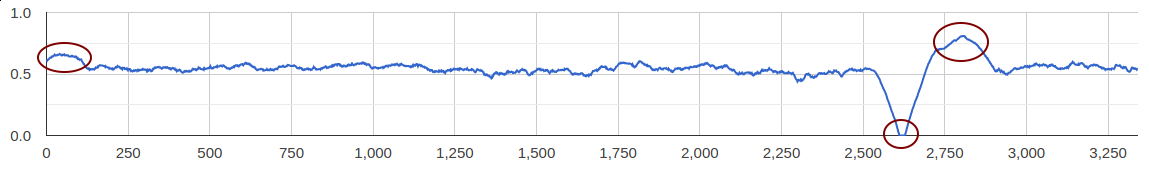

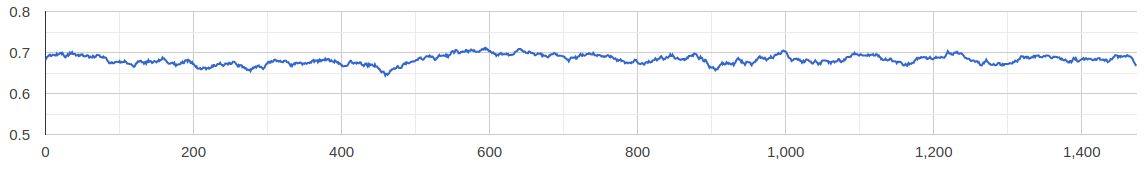

Now, as a result, I get charts showing entropy over each file. The bumps show where I placed some random string keys in the file. Just for kicks, I included a block of “AAAA…”, which is evident where entropy drops to 0. In contrast, the pem file has a consistently higher entropy throughout the whole file. As with so many things, different angles on the problem help to improve intuition. Plus I’m a sucker for a good picture.

This has been a brief look into how entropy patterns can be of use when scanning files for interesting information. Until next time.